Money as a Medium of Exchange: AlphaGo-Style Learning in a Kiyotaki-Wright Economy

Companion Notebook 2 — AlphaGo Meets Artificial Economies¶

This notebook applies the algorithms behind AlphaGo — DeepMind’s program that mastered the game of Go — to the same Kiyotaki-Wright monetary exchange model studied by Marimon, McGrattan, and Sargent (1990). Where the original paper used John Holland’s classifier systems as the AI for its agents, we here replace them with the Monte Carlo Tree Search (MCTS) + Neural Network architecture that powered AlphaGo and AlphaGo Zero.

Goal¶

Can AlphaGo-style agents recover the same equilibrium outcomes that the Holland classifier AI found in the MMS paper? Specifically:

Do agents learn to use the lowest-storage-cost good as a medium of exchange (fundamental equilibrium)?

When multiple equilibria exist, which one emerges?

Can agents discover the value of fiat money?

Reference¶

Marimon, R., McGrattan, E., & Sargent, T. J. (1990). “Money as a Medium of Exchange in an Economy with Artificially Intelligent Agents.” Journal of Economic Dynamics and Control, 14, 329-373.

Silver, D., et al. (2016). “Mastering the game of Go with deep neural networks and tree search.” Nature, 529, 484-489.

Silver, D., et al. (2017). “Mastering the game of Go without human knowledge.” Nature, 550, 354-359.

1. The AlphaGo Algorithm and Its Adaptation¶

How AlphaGo Works¶

AlphaGo (Silver et al., 2016, 2017) combined three key ideas:

Policy Network : A neural network that maps a game state to a probability distribution over actions . This provides an informed “prior” over which moves are promising.

Value Network : A neural network that estimates the expected outcome (win probability) from state . This replaces the need for full game rollouts.

Monte Carlo Tree Search (MCTS): A planning algorithm that combines the policy and value networks to search ahead. At each decision point:

Select actions using the PUCT formula:

Simulate future states using the resulting trajectories

Evaluate leaf nodes with the value network

Backpropagate values to update action-value estimates

The AlphaGo Zero training loop then iterates:

Use MCTS (guided by current networks) to generate improved policies

Train networks on (state, MCTS policy, MCTS value) triples

Repeat until convergence

Adaptation to the Kiyotaki-Wright Economy¶

| AlphaGo Component | KW Economy Adaptation |

|---|---|

| Game state (Go board) | Agent’s state: (own good, partner’s good) for trade; (holding) for consume |

| Actions (board positions) | Binary: {trade, refuse} or {consume, keep} |

| Policy network | : probability of trading/consuming given state |

| Value network | : expected discounted future payoff from state |

| Game simulator | Economy model: random matching, trade resolution, consumption |

| Self-play | Economy simulation with all agent types interacting |

| MCTS | Forward planning: simulate future periods to evaluate actions |

The key difference from Go: the KW economy is a multi-agent stochastic game rather than a two-player deterministic game. The outcome of a trade depends on the partner’s decision, and matching is random. We handle this by:

Modeling partner behavior using their current policy networks

Using the empirical distribution of holdings to estimate what goods partners hold

Running Monte Carlo rollouts through the stochastic economy

import numpy as np

import matplotlib.pyplot as plt

from dataclasses import dataclass, field

from typing import List, Tuple, Dict, Optional

from collections import defaultdict

import copy

import time

import warnings

warnings.filterwarnings('ignore')

# For reproducibility

np.random.seed(42)

%matplotlib inline

plt.rcParams['figure.figsize'] = (10, 6)

plt.rcParams['figure.dpi'] = 120

plt.rcParams['font.size'] = 11

print("Setup complete.")Setup complete.

2.2 Economy Configuration¶

The Kiyotaki-Wright environment is the same as in Companion Notebook 1. Three types of agents, three goods, a “Wicksell triangle” production structure with no double coincidence of wants, and asymmetric storage costs.

@dataclass

class EconomyConfig:

"""

Configuration for a Kiyotaki-Wright economy.

Same environment as Companion Notebook 1, but agents will use

AlphaGo-style learning instead of Holland classifier systems.

"""

name: str = "Economy A1"

n_types: int = 3

n_goods: int = 3

n_agents_per_type: int = 50

produces: np.ndarray = field(default_factory=lambda: np.array([1, 2, 0])) # 0-indexed

storage_costs: np.ndarray = field(default_factory=lambda: np.array([0.1, 1.0, 20.0]))

utility: np.ndarray = field(default_factory=lambda: np.array([100.0, 100.0, 100.0]))

discount: float = 0.95

n_fiat: int = 0 # Number of fiat money units to inject (Economy C)

@property

def n_agents(self):

return self.n_types * self.n_agents_per_type

print("EconomyConfig defined.")EconomyConfig defined.

2.3 The Policy-Value Neural Network¶

In AlphaGo, a combined policy-value network maps game states to both:

A policy : the probability of taking action in state

A value : the expected future payoff from state

For the KW economy, we implement this as a small feedforward network using NumPy (no deep learning framework needed — the state spaces are tiny):

Each agent type has two such networks:

Trade network: Input = one-hot encoding of (own good, partner’s good), Output = P(trade), V(state)

Consume network: Input = one-hot encoding of (current holding), Output = P(consume), V(state)

Training uses backpropagation with a combined loss:

where and are the MCTS-improved targets.

class PolicyValueNetwork:

"""

Combined policy-value neural network implemented in pure NumPy.

This is the core neural architecture from AlphaGo, adapted for the

Kiyotaki-Wright economy. Maps states to action probabilities and

state values using a shared representation with two output heads.

Architecture

------------

Input → Dense(hidden1) → ReLU → Dense(hidden2) → ReLU →

├── Policy Head → Dense(1) → Sigmoid → P(action=1|state)

└── Value Head → Dense(1) → Tanh → V(state) ∈ [-1, 1]

Training uses backpropagation with SGD on:

Loss = BCE(policy) + MSE(value) + L2_regularization

"""

def __init__(self, input_dim, hidden_dims=(32, 16), lr=0.005, l2_reg=1e-4):

self.input_dim = input_dim

self.lr = lr

self.l2_reg = l2_reg

# --- Xavier/He initialization ---

h1, h2 = hidden_dims

self.W1 = np.random.randn(input_dim, h1) * np.sqrt(2.0 / input_dim)

self.b1 = np.zeros(h1)

self.W2 = np.random.randn(h1, h2) * np.sqrt(2.0 / h1)

self.b2 = np.zeros(h2)

# Policy head: outputs P(action=1 | state) via sigmoid

self.Wp = np.random.randn(h2, 1) * 0.01

self.bp = np.zeros(1)

# Value head: outputs V(state) ∈ [-1, 1] via tanh

self.Wv = np.random.randn(h2, 1) * 0.01

self.bv = np.zeros(1)

def predict(self, x):

"""

Forward pass (no gradient caching).

Parameters

----------

x : np.ndarray

State vector(s), shape (input_dim,) or (batch, input_dim).

Returns

-------

policy : np.ndarray, shape (batch,)

P(action=1 | state) for each input.

value : np.ndarray, shape (batch,)

V(state) for each input.

"""

x = np.atleast_2d(x)

h = np.maximum(0, x @ self.W1 + self.b1) # ReLU

h = np.maximum(0, h @ self.W2 + self.b2) # ReLU

p_logit = h @ self.Wp + self.bp

policy = 1.0 / (1.0 + np.exp(-np.clip(p_logit, -10, 10)))

value = np.tanh(h @ self.Wv + self.bv)

return policy.flatten(), value.flatten()

def train_batch(self, states, target_policies, target_values, n_epochs=5):

"""

Train on a batch using full backpropagation.

Parameters

----------

states : array-like, shape (n, input_dim)

target_policies : array-like, shape (n,)

MCTS-improved action probabilities.

target_values : array-like, shape (n,)

MCTS value estimates, normalized to [-1, 1].

n_epochs : int

Number of gradient descent steps on this batch.

"""

X = np.atleast_2d(states)

tp = np.array(target_policies).flatten()

tv = np.array(target_values).flatten()

n = max(len(X), 1)

for _ in range(n_epochs):

# --- Forward pass with caching ---

z1 = X @ self.W1 + self.b1

h1 = np.maximum(0, z1)

z2 = h1 @ self.W2 + self.b2

h2 = np.maximum(0, z2)

p_logit = h2 @ self.Wp + self.bp

p = 1.0 / (1.0 + np.exp(-np.clip(p_logit, -10, 10))).flatten()

v_raw = h2 @ self.Wv + self.bv

v = np.tanh(v_raw).flatten()

# --- Backpropagation ---

# Policy: d(BCE)/d(logit) = p - target

dp = ((p - tp) / n).reshape(-1, 1)

# Value: d(MSE)/d(v_raw) = 2(v - target) * (1 - v^2) / n

dv = (2.0 * (v - tv) * (1.0 - v**2) / n).reshape(-1, 1)

# Policy head gradients

dWp = h2.T @ dp + self.l2_reg * self.Wp

dbp = dp.sum(axis=0)

# Value head gradients

dWv = h2.T @ dv + self.l2_reg * self.Wv

dbv = dv.sum(axis=0)

# Hidden layer 2: merge gradients from both heads

dh2 = dp @ self.Wp.T + dv @ self.Wv.T

dz2 = dh2 * (z2 > 0)

dW2 = h1.T @ dz2 + self.l2_reg * self.W2

db2 = dz2.sum(axis=0)

# Hidden layer 1

dh1 = dz2 @ self.W2.T

dz1 = dh1 * (z1 > 0)

dW1 = X.T @ dz1 + self.l2_reg * self.W1

db1 = dz1.sum(axis=0)

# --- SGD update ---

self.W1 -= self.lr * dW1; self.b1 -= self.lr * db1

self.W2 -= self.lr * dW2; self.b2 -= self.lr * db2

self.Wp -= self.lr * dWp; self.bp -= self.lr * dbp

self.Wv -= self.lr * dWv; self.bv -= self.lr * dbv

def copy(self):

"""Create a deep copy of this network."""

new = PolicyValueNetwork.__new__(PolicyValueNetwork)

new.input_dim = self.input_dim

new.lr = self.lr

new.l2_reg = self.l2_reg

for attr in ['W1', 'b1', 'W2', 'b2', 'Wp', 'bp', 'Wv', 'bv']:

setattr(new, attr, getattr(self, attr).copy())

return new

# --- Quick test ---

net = PolicyValueNetwork(input_dim=6)

test_state = np.array([1, 0, 0, 0, 1, 0]) # Own good 1, partner good 2

p, v = net.predict(test_state)

print(f"Test forward pass: P(trade)={p[0]:.4f}, V(state)={v[0]:.4f}")

print("PolicyValueNetwork defined.")Test forward pass: P(trade)=0.5029, V(state)=-0.0097

PolicyValueNetwork defined.

2.4 Economy Model (World Model for MCTS)¶

AlphaGo uses a game simulator to explore future states during MCTS. Our analog is an economy model that captures:

How trades are resolved (both agents must agree)

How consumption works (utility minus storage costs)

The distribution of goods in the population (for modeling random matching)

Other agents’ likely trade behavior (estimated from their current policies)

This model enables MCTS to “look ahead” and evaluate the consequences of trade and consumption decisions across multiple future periods.

class EconomyModel:

"""

A simplified model of the Kiyotaki-Wright economy for MCTS rollouts.

Analogous to AlphaGo's game simulator, this model allows MCTS to

simulate future periods of the economy. It maintains estimates of:

- partner_trade_probs[j, i]: P(a partner holding good j will trade for good i)

Aggregated across all agent types, weighted by the current holdings distribution.

- goods_dist[k]: fraction of the population holding good k

These are updated from actual simulation data during training.

"""

def __init__(self, config, partner_trade_probs=None, goods_dist=None):

self.config = config

self.n_goods = config.n_goods

self.n_types = config.n_types

if partner_trade_probs is None:

self.partner_trade_probs = np.ones((config.n_goods, config.n_goods)) * 0.5

else:

self.partner_trade_probs = partner_trade_probs.copy()

if goods_dist is None:

self.goods_dist = np.ones(config.n_goods) / config.n_goods

else:

self.goods_dist = goods_dist.copy()

def encode_trade_state(self, own_good, partner_good):

"""One-hot encode (own_good, partner_good) → vector of length 2*n_goods."""

vec = np.zeros(2 * self.n_goods)

vec[own_good] = 1.0

vec[self.n_goods + partner_good] = 1.0

return vec

def encode_consume_state(self, holding):

"""One-hot encode holding → vector of length n_goods."""

vec = np.zeros(self.n_goods)

vec[holding] = 1.0

return vec

def resolve_trade(self, my_type, own_good, partner_good, my_action):

"""

Resolve a trade: if I propose, partner accepts with estimated probability.

Returns the post-trade holding.

"""

if my_action == 0 or own_good == partner_good:

return own_good

p_accept = self.partner_trade_probs[partner_good, own_good]

if np.random.rand() < p_accept:

return partner_good

return own_good

def step_consume(self, agent_type, holding, action):

"""

Execute consumption decision.

Returns (reward, new_holding).

Agent can only consume their own consumption good (good == type_id),

and cannot consume fiat money (good >= n_types).

"""

config = self.config

if action == 1 and holding == agent_type and holding < config.n_types:

utility = config.utility[agent_type]

new_good = config.produces[agent_type]

cost = config.storage_costs[new_good]

return utility - cost, new_good

else:

return -config.storage_costs[holding], holding

def sample_partner_good(self):

"""Sample a random partner's good from the population distribution."""

return np.random.choice(self.n_goods, p=self.goods_dist)

def update_from_simulation(self, holdings, agent_types, type_trade_policies):

"""

Update the world model from simulation statistics.

Parameters

----------

holdings : np.ndarray

Current holdings of all agents.

agent_types : np.ndarray

Type of each agent.

type_trade_policies : list of np.ndarray

trade_policy[own, partner] for each agent type.

"""

# Update goods distribution

for k in range(self.n_goods):

self.goods_dist[k] = np.mean(holdings == k)

self.goods_dist = np.clip(self.goods_dist, 0.01, None)

self.goods_dist /= self.goods_dist.sum()

# Update aggregate trade probabilities:

# P(trade | partner_has=j, I_have=i) = sum_t P(type=t | holds j) * P_t(trade | has j, sees i)

for j in range(self.n_goods):

for i in range(self.n_goods):

prob, total_w = 0.0, 0.0

for t in range(self.n_types):

type_mask = agent_types == t

w = np.mean(holdings[type_mask] == j) if type_mask.any() else 0.0

if w > 0:

prob += w * type_trade_policies[t][j, i]

total_w += w

self.partner_trade_probs[j, i] = prob / total_w if total_w > 0 else 0.5

# Clip to prevent coordination collapse:

# Maintain minimum trade acceptance so MCTS doesn't dismiss trading

self.partner_trade_probs = np.clip(self.partner_trade_probs, 0.1, 0.95)

print("EconomyModel defined.")EconomyModel defined.

2.5 Monte Carlo Tree Search (MCTS)¶

MCTS is the heart of AlphaGo’s decision-making. At each decision point, it:

Selects an action using the PUCT (Polynomial Upper Confidence Trees) formula:

where is the mean value, is the neural network prior, and counts visits.

Simulates a rollout: plays out the economy for several periods using the current policy networks, accumulating discounted rewards.

Evaluates the terminal state with the value network.

Backpropagates the total discounted return to update and .

After all simulations, the improved policy is proportional to visit counts: .

For the KW economy, since actions are binary (trade/refuse, consume/keep), each MCTS search efficiently evaluates both options via many stochastic rollouts through the economy.

class MCTS:

"""

Monte Carlo Tree Search adapted from AlphaGo Zero for the KW economy.

Key design choices:

- PUCT formula for action selection at the root node (AlphaGo Zero)

- Heuristic rollout policy: always consume own good, random trades

This avoids a negative feedback loop where poorly-trained networks

cause bad rollouts → bad training data → worse networks.

- Value network for terminal state evaluation

- Storage costs included in rollout rewards for proper discounting

Parameters

----------

trade_net, consume_net : PolicyValueNetwork

Current policy-value networks for this agent type.

model : EconomyModel

World model for simulating the economy.

agent_type : int

This agent's type (0-indexed).

c_puct : float

Exploration constant in PUCT formula (higher = more exploration).

n_simulations : int

Number of MCTS simulations per search.

rollout_depth : int

Number of economy periods to simulate in each rollout.

discount : float

Discount factor for future rewards.

"""

def __init__(self, trade_net, consume_net, model, agent_type,

c_puct=2.0, n_simulations=200, rollout_depth=15, discount=0.95):

self.trade_net = trade_net

self.consume_net = consume_net

self.model = model

self.agent_type = agent_type

self.c_puct = c_puct

self.n_sims = n_simulations

self.depth = rollout_depth

self.discount = discount

def search_trade(self, own_good, partner_good):

"""

Run MCTS for a trade decision.

Returns

-------

policy_trade : float

MCTS-improved probability of trading (visit-count distribution).

avg_value : float

Estimated value of this state.

"""

# Get neural network prior for PUCT

state_vec = self.model.encode_trade_state(own_good, partner_good)

prior, _ = self.trade_net.predict(state_vec)

prior_trade = float(np.clip(prior[0], 0.01, 0.99))

# Track statistics for each action: [refuse, trade]

visits = np.zeros(2)

values = np.zeros(2)

priors = np.array([1.0 - prior_trade, prior_trade])

for _ in range(self.n_sims):

total_n = visits.sum()

# --- PUCT action selection (AlphaGo Zero formula) ---

q = np.where(visits > 0, values / visits, 0.0)

u = self.c_puct * priors * np.sqrt(total_n + 1) / (1.0 + visits)

action = np.argmax(q + u)

# --- Rollout from this action ---

v = self._rollout_trade(own_good, partner_good, action)

visits[action] += 1

values[action] += v

# Policy = normalized visit counts (AlphaGo Zero: π ∝ N^(1/τ))

total_visits = visits.sum()

policy_trade = visits[1] / max(total_visits, 1)

avg_value = values.sum() / max(total_visits, 1)

return policy_trade, avg_value

def search_consume(self, holding):

"""

Run MCTS for a consume decision.

Returns

-------

policy_consume : float

MCTS-improved probability of consuming.

avg_value : float

Estimated value of this state.

"""

state_vec = self.model.encode_consume_state(holding)

prior, _ = self.consume_net.predict(state_vec)

prior_consume = float(np.clip(prior[0], 0.01, 0.99))

visits = np.zeros(2)

values = np.zeros(2)

priors = np.array([1.0 - prior_consume, prior_consume])

for _ in range(self.n_sims):

total_n = visits.sum()

q = np.where(visits > 0, values / visits, 0.0)

u = self.c_puct * priors * np.sqrt(total_n + 1) / (1.0 + visits)

action = np.argmax(q + u)

v = self._rollout_consume(holding, action)

visits[action] += 1

values[action] += v

total_visits = visits.sum()

policy_consume = visits[1] / max(total_visits, 1)

avg_value = values.sum() / max(total_visits, 1)

return policy_consume, avg_value

def _heuristic_consume(self, holding):

"""

Heuristic rollout policy for consumption: always consume own good.

This is the provably optimal consume policy in KW (utility >> storage cost),

so using it as the rollout default gives MCTS accurate value estimates

without requiring a pre-trained consume network.

"""

if holding == self.agent_type and holding < self.model.n_types:

return 1

return 0

def _rollout_trade(self, own_good, partner_good, trade_action):

"""

Simulate from a trade decision using heuristic rollout policy.

Uses random trade decisions and always-consume-own-good as the default

rollout policy. This allows MCTS to discover the value of different

trade actions without relying on potentially poorly-trained networks.

"""

# Resolve the initial trade action

holding = self.model.resolve_trade(

self.agent_type, own_good, partner_good, trade_action)

# Heuristic consume: always consume own good

c_action = self._heuristic_consume(holding)

reward, holding = self.model.step_consume(

self.agent_type, holding, c_action)

total = reward

# Continue rollout with heuristic policy

for d in range(1, self.depth):

df = self.discount ** d

# Random partner

partner_good = self.model.sample_partner_good()

# Random trade decision (50/50) — explores trade space

t_action = np.random.randint(0, 2)

holding = self.model.resolve_trade(

self.agent_type, holding, partner_good, t_action)

# Always consume own good

c_action = self._heuristic_consume(holding)

r, holding = self.model.step_consume(

self.agent_type, holding, c_action)

total += df * r

# Terminal value from value network

c_vec = self.model.encode_consume_state(holding)

_, v = self.consume_net.predict(c_vec)

total += (self.discount ** self.depth) * float(v[0])

return total

def _rollout_consume(self, holding, consume_action):

"""

Simulate from a consume decision using heuristic rollout policy.

"""

reward, holding = self.model.step_consume(

self.agent_type, holding, consume_action)

total = reward

for d in range(1, self.depth):

df = self.discount ** d

partner_good = self.model.sample_partner_good()

# Random trade, heuristic consume

t_action = np.random.randint(0, 2)

holding = self.model.resolve_trade(

self.agent_type, holding, partner_good, t_action)

c_action = self._heuristic_consume(holding)

r, holding = self.model.step_consume(

self.agent_type, holding, c_action)

total += df * r

# Terminal value

c_vec = self.model.encode_consume_state(holding)

_, v = self.consume_net.predict(c_vec)

total += (self.discount ** self.depth) * float(v[0])

return total

print("MCTS defined.")MCTS defined.

2.6 The AlphaGo Agent¶

Each agent type has an AlphaGoAgent that combines:

Two policy-value networks (trade and consume)

MCTS search for policy improvement

Cached policy tables for fast decision-making during simulation

The training follows AlphaGo Zero’s self-play loop:

Run the economy to estimate the current state (holdings distribution, partner behavior)

For each possible state, run MCTS to compute improved policies

Train the neural networks to match the MCTS-improved targets

Repeat

This replaces the classifier system’s bucket brigade + genetic algorithm learning mechanism with a fundamentally different approach: forward-looking search (MCTS) combined with function approximation (neural networks).

class AlphaGoAgent:

"""

An agent type that uses AlphaGo-style algorithms for decision-making

in the Kiyotaki-Wright economy.

Replaces the Holland classifier system with:

- Policy-value neural networks (trade and consume)

- MCTS for policy improvement via forward search

- Self-play training loop

Key design features:

1. Networks pre-trained with domain knowledge (like AlphaGo's SL phase)

2. MCTS policy improvement with PUCT exploration (AlphaGo Zero)

3. Exponential Moving Average (EMA) for policy updates to stabilize

training despite noisy MCTS estimates

Parameters

----------

type_id : int

Agent type (0-indexed).

config : EconomyConfig

Economy configuration.

hidden_dims : tuple

Hidden layer sizes for neural networks.

lr : float

Learning rate for neural network training.

"""

def __init__(self, type_id, config, hidden_dims=(32, 16), lr=0.005):

self.type_id = type_id

self.config = config

self.n_goods = config.n_goods

# Neural networks

trade_input_dim = 2 * config.n_goods # one-hot(own) + one-hot(partner)

consume_input_dim = config.n_goods # one-hot(holding)

self.trade_net = PolicyValueNetwork(trade_input_dim, hidden_dims, lr)

self.consume_net = PolicyValueNetwork(consume_input_dim, hidden_dims, lr)

# Cached policy tables (updated by MCTS with EMA smoothing)

self.trade_policy = np.ones((config.n_goods, config.n_goods)) * 0.5

self.consume_policy = np.ones(config.n_goods) * 0.05

if type_id < config.n_goods:

self.consume_policy[type_id] = 0.95 # Strong prior: consume own good

# Pre-train networks with domain knowledge

self._pretrain_networks()

def _pretrain_networks(self):

"""

Pre-train networks with domain knowledge about the KW economy.

This bootstraps the learning process with sensible priors:

- Always consume your own consumption good

- Trade for your consumption good when possible

- Don't trade away your consumption good

- Prefer goods with lower storage costs

"""

config = self.config

n = self.n_goods

# === Consume network ===

c_states, c_probs, c_vals = [], [], []

for g in range(n):

state = np.zeros(n)

state[g] = 1.0

if g == self.type_id and g < config.n_types:

c_states.append(state)

c_probs.append(0.95)

c_vals.append(0.8)

else:

c_states.append(state)

c_probs.append(0.05)

c_vals.append(-0.3)

c_states = np.array(c_states)

c_probs = np.array(c_probs)

c_vals = np.array(c_vals)

for _ in range(300):

self.consume_net.train_batch(c_states, c_probs, c_vals, 1)

# === Trade network ===

prod = config.produces[self.type_id]

cons = self.type_id

t_states, t_probs, t_vals = [], [], []

for own in range(n):

for ptr in range(n):

state = np.zeros(2 * n)

state[own] = 1.0

state[n + ptr] = 1.0

if own == ptr:

p, v = 0.5, 0.0

elif ptr == cons and own != cons:

p, v = 0.9, 0.6

elif own == cons:

p, v = 0.05, -0.5

else:

if own < n and ptr < n:

cost_own = config.storage_costs[own]

cost_ptr = config.storage_costs[ptr]

if cost_ptr < cost_own:

p, v = 0.65, 0.1

else:

p, v = 0.35, -0.1

else:

p, v = 0.5, 0.0

t_states.append(state)

t_probs.append(p)

t_vals.append(v)

t_states = np.array(t_states)

t_probs = np.array(t_probs)

t_vals = np.array(t_vals)

for _ in range(300):

self.trade_net.train_batch(t_states, t_probs, t_vals, 1)

def get_trade_decision(self, own_good, partner_good, explore=False, epsilon=0.1):

"""Get trade decision using the cached policy table."""

prob = self.trade_policy[own_good, partner_good]

if explore and np.random.rand() < epsilon:

return np.random.randint(0, 2)

return 1 if np.random.rand() < prob else 0

def get_consume_decision(self, holding, explore=False, epsilon=0.1):

"""Get consume decision using the cached policy table."""

prob = self.consume_policy[holding]

if explore and np.random.rand() < epsilon:

return np.random.randint(0, 2)

return 1 if np.random.rand() < prob else 0

def run_mcts_improvement(self, economy_model, n_sims=200,

rollout_depth=15, c_puct=2.0, policy_lr=0.3):

"""

Run MCTS for ALL possible states to compute improved policies.

Uses Exponential Moving Average (EMA) to update the policy tables:

π_new = (1 - lr) * π_old + lr * π_mcts

This stabilizes training by preventing noisy MCTS estimates from

completely overwriting sensible policies from previous iterations.

Analogous to the target network update in DQN or the slow update

in AlphaGo Zero's training pipeline.

Parameters

----------

economy_model : EconomyModel

Current world model.

n_sims : int

MCTS simulations per state.

rollout_depth : int

Look-ahead depth.

c_puct : float

MCTS exploration constant.

policy_lr : float

EMA learning rate for blending MCTS policy with current policy.

Lower = more stable but slower adaptation.

"""

mcts = MCTS(self.trade_net, self.consume_net, economy_model,

self.type_id, c_puct, n_sims, rollout_depth,

self.config.discount)

n = self.n_goods

# --- Trade states: all (own, partner) combinations ---

trade_states, trade_policies, trade_values = [], [], []

for own in range(n):

for partner in range(n):

p, v = mcts.search_trade(own, partner)

# EMA update: blend MCTS result with current policy

self.trade_policy[own, partner] = (

(1 - policy_lr) * self.trade_policy[own, partner] + policy_lr * p)

trade_states.append(economy_model.encode_trade_state(own, partner))

trade_policies.append(self.trade_policy[own, partner])

trade_values.append(v)

# Normalize values to [-1, 1] for tanh output

tv = np.array(trade_values)

if tv.max() - tv.min() > 1e-8:

tv_norm = 2.0 * (tv - tv.min()) / (tv.max() - tv.min()) - 1.0

else:

tv_norm = np.zeros_like(tv)

# --- Consume states: all holdings ---

consume_states, consume_policies, consume_values = [], [], []

for h in range(n):

p, v = mcts.search_consume(h)

self.consume_policy[h] = (

(1 - policy_lr) * self.consume_policy[h] + policy_lr * p)

consume_states.append(economy_model.encode_consume_state(h))

consume_policies.append(self.consume_policy[h])

consume_values.append(v)

cv = np.array(consume_values)

if cv.max() - cv.min() > 1e-8:

cv_norm = 2.0 * (cv - cv.min()) / (cv.max() - cv.min()) - 1.0

else:

cv_norm = np.zeros_like(cv)

return (np.array(trade_states), np.array(trade_policies), tv_norm,

np.array(consume_states), np.array(consume_policies), cv_norm)

def train_networks(self, trade_data, consume_data, n_epochs=10):

"""Train neural networks on MCTS-generated data."""

t_states, t_policies, t_values = trade_data

self.trade_net.train_batch(t_states, t_policies, t_values, n_epochs)

c_states, c_policies, c_values = consume_data

self.consume_net.train_batch(c_states, c_policies, c_values, n_epochs)

print("AlphaGoAgent defined.")AlphaGoAgent defined.

2.7 The AlphaGo Zero Training Loop¶

The training loop iterates between:

Self-play — Run the economy to estimate the current state

MCTS policy improvement — Search ahead to find better policies

Network training — Update neural networks to match MCTS outputs

This mirrors AlphaGo Zero’s algorithm, where the only source of knowledge is self-play guided by MCTS. No human expert data is used — agents learn purely from interacting with each other in the economy.

def run_quick_simulation(config, agents, n_periods=200, epsilon=0.3):

"""

Run a short economy simulation to estimate the current state.

Used during training to get the holdings distribution and

calibrate the economy model for MCTS rollouts.

Parameters

----------

config : EconomyConfig

Economy configuration.

agents : list of AlphaGoAgent

Current agents with their policies.

n_periods : int

Number of economy periods to simulate.

epsilon : float

Exploration rate (higher = more random exploration).

Returns

-------

holdings : np.ndarray

Final holdings of all agents.

agent_types : np.ndarray

Type of each agent.

"""

n_agents = config.n_agents

agent_types = np.repeat(np.arange(config.n_types), config.n_agents_per_type)

# Initialize with production goods (matching the real simulation)

holdings = np.zeros(n_agents, dtype=int)

for i in range(config.n_types):

mask = agent_types == i

holdings[mask] = config.produces[i]

# Handle fiat money if present

if config.n_fiat > 0:

fiat_idx = np.random.choice(n_agents, size=config.n_fiat, replace=False)

holdings[fiat_idx] = config.n_goods - 1

for t in range(n_periods):

perm = np.random.permutation(n_agents)

n_pairs = n_agents // 2

for p in range(n_pairs):

a1, a2 = perm[2*p], perm[2*p+1]

t1, t2 = agent_types[a1], agent_types[a2]

g1, g2 = holdings[a1], holdings[a2]

act1 = agents[t1].get_trade_decision(g1, g2, explore=True, epsilon=epsilon)

act2 = agents[t2].get_trade_decision(g2, g1, explore=True, epsilon=epsilon)

if act1 == 1 and act2 == 1 and g1 != g2:

holdings[a1], holdings[a2] = g2, g1

for aidx, tidx in [(a1, t1), (a2, t2)]:

h = holdings[aidx]

ca = agents[tidx].get_consume_decision(h, explore=True, epsilon=epsilon)

if ca == 1 and h == tidx and h < config.n_types:

holdings[aidx] = config.produces[tidx]

return holdings, agent_types

def train_alphago_agents(config, n_iterations=40, n_eval_periods=300,

n_sims=200, rollout_depth=15, c_puct=2.0,

net_epochs=10, verbose=True):

"""

AlphaGo Zero-style training loop for the Kiyotaki-Wright economy.

Training cycle (per iteration):

1. Self-play: run economy simulation with current policies + exploration

2. Model update: calibrate economy model from simulation statistics

3. MCTS policy improvement: search for better policies

4. Network training: update neural networks to match MCTS-improved policies

Uses an epsilon schedule that starts high (exploration) and decays

over training iterations, analogous to temperature annealing in

AlphaGo Zero's self-play.

Parameters

----------

config : EconomyConfig

Economy configuration.

n_iterations : int

Number of training iterations (self-play → MCTS → train cycles).

n_eval_periods : int

Periods of economy simulation per iteration for state estimation.

n_sims : int

MCTS simulations per state.

rollout_depth : int

MCTS rollout depth (economy periods to simulate ahead).

c_puct : float

MCTS exploration constant.

net_epochs : int

Neural network training epochs per iteration.

verbose : bool

Print progress.

Returns

-------

agents : list of AlphaGoAgent

Trained agents.

economy_model : EconomyModel

Final economy model.

training_history : dict

Training metrics over iterations.

"""

agents = [AlphaGoAgent(i, config) for i in range(config.n_types)]

economy_model = EconomyModel(config)

history = {'iterations': [], 'trade_policies': [], 'consume_policies': []}

for iteration in range(1, n_iterations + 1):

# Epsilon schedule: start high (0.5) for exploration, decay to 0.1

epsilon = max(0.1, 0.5 * (1.0 - iteration / n_iterations))

if verbose:

print(f" Iteration {iteration:2d}/{n_iterations} (ε={epsilon:.2f})", end="")

# --- Step 1: Self-play to estimate economy state ---

holdings, agent_types = run_quick_simulation(

config, agents, n_periods=n_eval_periods, epsilon=epsilon)

# Build type-specific trade probability tables

type_trade_policies = [a.trade_policy.copy() for a in agents]

economy_model.update_from_simulation(

holdings, agent_types, type_trade_policies)

# --- Step 2: MCTS policy improvement ---

for agent in agents:

trade_data = agent.run_mcts_improvement(

economy_model, n_sims=n_sims,

rollout_depth=rollout_depth, c_puct=c_puct)

# Unpack: trade_data is (t_states, t_policies, t_values,

# c_states, c_policies, c_values)

t_data = (trade_data[0], trade_data[1], trade_data[2])

c_data = (trade_data[3], trade_data[4], trade_data[5])

# --- Step 3: Train neural networks ---

agent.train_networks(t_data, c_data, n_epochs=net_epochs)

# Record training progress

history['iterations'].append(iteration)

history['trade_policies'].append(

[a.trade_policy.copy() for a in agents])

history['consume_policies'].append(

[a.consume_policy.copy() for a in agents])

if verbose:

# Show key policy values for each type

policies_str = ""

for i, a in enumerate(agents):

prod = config.produces[i]

cons = i # Their consumption good

p_trade = a.trade_policy[prod, cons]

p_cons = a.consume_policy[cons]

policies_str += f" T{i+1}:trade={p_trade:.2f},cons={p_cons:.2f}"

print(policies_str)

return agents, economy_model, history

print("Training functions defined.")Training functions defined.

2.8 Simulation Engine and Visualization¶

The simulation engine runs the full economy with trained AlphaGo agents, tracking the distribution of holdings — the fraction of type agents holding good — just as in the classifier system simulation.

class KWAlphaGoSimulation:

"""

Full simulation of the Kiyotaki-Wright economy with AlphaGo agents.

Identical structure to the classifier system simulation in Companion

Notebook 1, but agents use trained neural network policies instead

of classifier systems.

"""

def __init__(self, config, agents, seed=None):

self.config = config

self.agents = agents

if seed is not None:

np.random.seed(seed)

self.agent_types = np.repeat(

np.arange(config.n_types), config.n_agents_per_type)

# Initialize holdings with production goods (each agent starts

# with the good they produce, matching the KW model setup)

self.holdings = np.zeros(config.n_agents, dtype=int)

for i in range(config.n_types):

mask = self.agent_types == i

self.holdings[mask] = config.produces[i]

if config.n_fiat > 0:

fiat_idx = np.random.choice(

config.n_agents, size=config.n_fiat, replace=False)

self.holdings[fiat_idx] = config.n_goods - 1

self.history = {

'holdings_dist': [],

'trade_rates': [],

'consumption_rates': []

}

def run(self, n_periods, record_every=1, verbose=True):

"""Run the economy for n_periods."""

for t in range(1, n_periods + 1):

trades = 0

consumptions = 0

perm = np.random.permutation(self.config.n_agents)

n_pairs = self.config.n_agents // 2

for p in range(n_pairs):

a1, a2 = perm[2*p], perm[2*p+1]

t1, t2 = self.agent_types[a1], self.agent_types[a2]

g1, g2 = self.holdings[a1], self.holdings[a2]

# Trade decisions

act1 = self.agents[t1].get_trade_decision(g1, g2)

act2 = self.agents[t2].get_trade_decision(g2, g1)

if act1 == 1 and act2 == 1 and g1 != g2:

self.holdings[a1], self.holdings[a2] = g2, g1

trades += 1

# Consumption decisions

for aidx, tidx in [(a1, t1), (a2, t2)]:

h = self.holdings[aidx]

ca = self.agents[tidx].get_consume_decision(h)

if ca == 1 and h == tidx and h < self.config.n_types:

self.holdings[aidx] = self.config.produces[tidx]

consumptions += 1

if t % record_every == 0:

dist = self._compute_holdings_dist()

self.history['holdings_dist'].append(dist.copy())

self.history['trade_rates'].append(trades)

self.history['consumption_rates'].append(consumptions)

if verbose and t % max(1, n_periods // 10) == 0:

print(f" Period {t:5d}/{n_periods}: "

f"Trades={trades:3d}, Consumptions={consumptions:3d}")

def _compute_holdings_dist(self):

"""Compute π_i^h(k): fraction of type i holding good k."""

config = self.config

dist = np.zeros((config.n_types, config.n_goods))

for i in range(config.n_types):

mask = self.agent_types == i

for k in range(config.n_goods):

dist[i, k] = np.mean(self.holdings[mask] == k)

return dist

# ========== Visualization Functions ==========

def plot_holdings_distribution(sim, record_every=1, title=None):

"""Plot the distribution of holdings over time for each agent type."""

config = sim.config

history = np.array(sim.history['holdings_dist'])

T = len(history)

time_axis = np.arange(T) * record_every

fig, axes = plt.subplots(1, config.n_types,

figsize=(5 * config.n_types, 4), sharey=True)

if config.n_types == 1:

axes = [axes]

colors = ['#1f77b4', '#ff7f0e', '#2ca02c', '#d62728', '#9467bd']

linestyles = ['-', '--', ':', '-.', '-']

for i, ax in enumerate(axes):

for k in range(config.n_goods):

label = f'Good {k+1}' if k < 3 else ('Fiat $' if k == 3 else f'Good {k+1}')

ax.plot(time_axis, history[:, i, k] * 100,

color=colors[k % len(colors)],

linestyle=linestyles[k % len(linestyles)],

linewidth=1.5, label=label)

ax.set_xlabel('Period')

ax.set_ylabel('% Holding' if i == 0 else '')

ax.set_title(f'Type {i+1} Agent')

ax.legend(loc='best', fontsize=9)

ax.set_ylim(-5, 105)

ax.grid(True, alpha=0.3)

if title is None:

title = f"Distribution of Holdings — {config.name}"

fig.suptitle(title, fontsize=13, fontweight='bold')

plt.tight_layout()

plt.show()

return fig

def print_holdings_table(sim, period_label=""):

"""Print the holdings distribution table."""

dist = sim._compute_holdings_dist()

config = sim.config

header = f"{'π_i^h(j)':>10s}"

for k in range(config.n_goods):

if k < 3:

header += f" j={k+1:d} "

else:

header += f" j=fiat "

print(f"\nHoldings Distribution {period_label}")

print("=" * (10 + 9 * config.n_goods))

print(header)

print("-" * (10 + 9 * config.n_goods))

for i in range(config.n_types):

row = f" i={i+1:d} "

for k in range(config.n_goods):

row += f" {dist[i, k]:.3f} "

print(row)

print()

def print_learned_policies(agents, config):

"""Display the learned trade and consume policies."""

good_names = [f'Good {k+1}' for k in range(min(config.n_goods, 3))]

if config.n_goods > 3:

good_names.append('Fiat $')

for i, agent in enumerate(agents):

print(f"\nType {i+1} Agent — Learned Trade Policy P(trade=1):")

header = " Own \\ Partner " + " ".join(f"{g:>7s}" for g in good_names)

print(header)

print(" " + "-" * (15 + 9 * config.n_goods))

for own in range(config.n_goods):

row = f" {good_names[own]:>13s} "

for partner in range(config.n_goods):

p = agent.trade_policy[own, partner]

row += f" {p:>5.3f} "

print(row)

print(f"\n Consume Policy P(consume=1):")

for h in range(config.n_goods):

print(f" Holding {good_names[h]}: {agent.consume_policy[h]:.3f}")

def plot_policy_evolution(training_history, config, agent_idx=None):

"""Plot how policies evolved during training."""

iterations = training_history['iterations']

n_types = config.n_types

if agent_idx is None:

fig, axes = plt.subplots(1, n_types, figsize=(5 * n_types, 4), sharey=True)

if n_types == 1:

axes = [axes]

plot_types = range(n_types)

else:

fig, axes = plt.subplots(1, 1, figsize=(6, 4))

axes = [axes]

plot_types = [agent_idx]

for ax_idx, type_idx in enumerate(plot_types):

ax = axes[ax_idx]

# Track key trade decisions over training

for own in range(config.n_goods):

for partner in range(config.n_goods):

if own != partner:

policies = [tp[type_idx][own, partner]

for tp in training_history['trade_policies']]

ax.plot(iterations, policies, linewidth=1.2, alpha=0.7,

label=f'own={own+1},ptr={partner+1}')

ax.set_xlabel('Training Iteration')

ax.set_ylabel('P(trade)' if ax_idx == 0 else '')

ax.set_title(f'Type {type_idx+1} Trade Policies')

ax.set_ylim(-0.05, 1.05)

ax.grid(True, alpha=0.3)

if config.n_goods <= 3:

ax.legend(loc='best', fontsize=7, ncol=2)

fig.suptitle(f'Policy Evolution During Training — {config.name}',

fontsize=13, fontweight='bold')

plt.tight_layout()

plt.show()

return fig

print("Simulation engine and visualization functions defined.")Simulation engine and visualization functions defined.

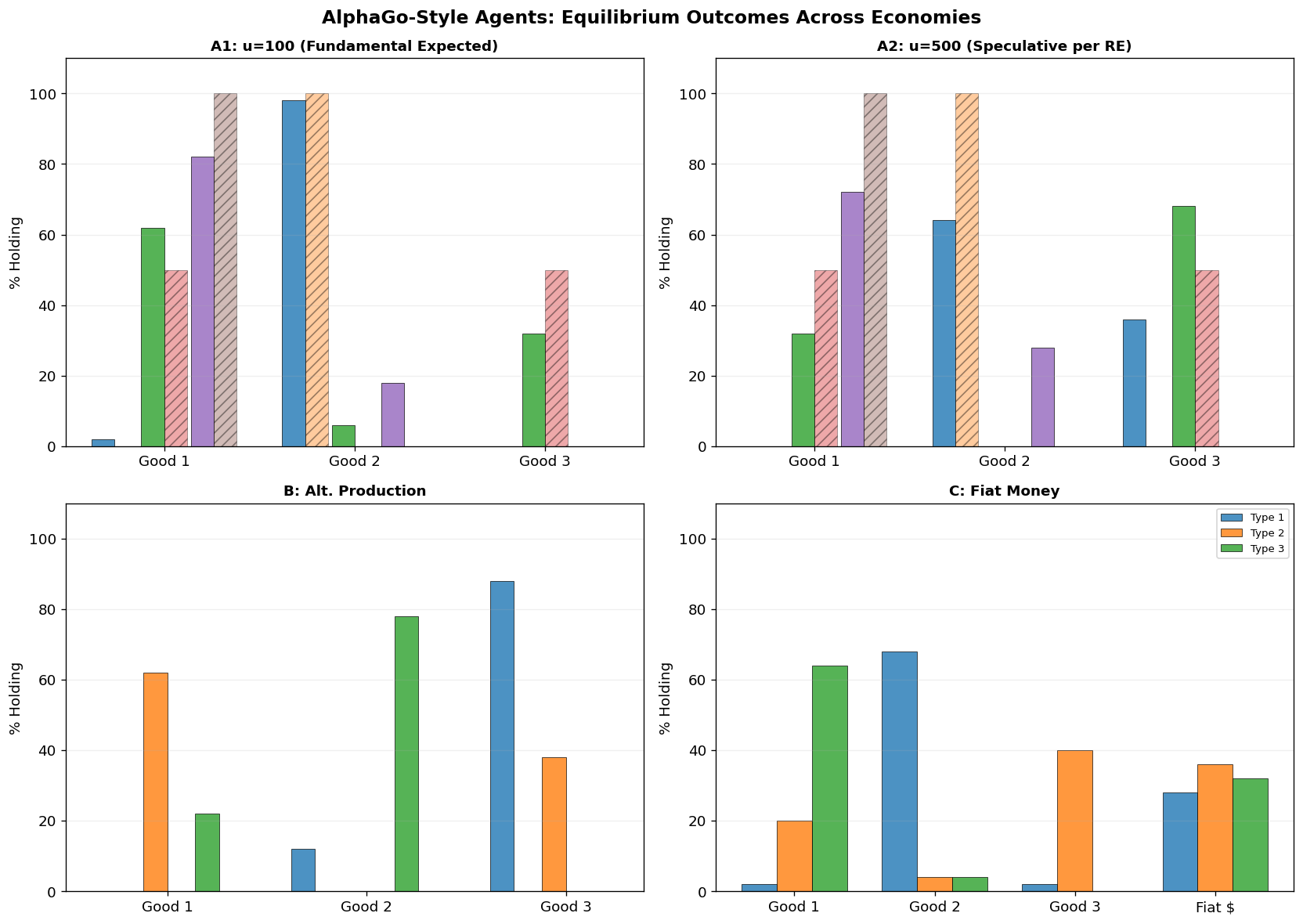

3. Economy A1: Fundamental Equilibrium¶

Economy A1 uses the Model A production structure (the “Wicksell triangle”):

| Type | Produces | Consumes | Storage Cost |

|---|---|---|---|

| 1 | Good 2 | Good 1 | |

| 2 | Good 3 | Good 2 | |

| 3 | Good 1 | Good 3 |

Expected result: The classifier system in the MMS paper converged to the fundamental equilibrium where Good 1 (lowest storage cost) serves as the general medium of exchange:

| 0 | 1 | 0 | |

| 0.5 | 0 | 0.5 | |

| 1 | 0 | 0 |

Can AlphaGo Zero-style agents discover this same equilibrium through self-play and MCTS?

# Economy A1 Configuration

config_a1 = EconomyConfig(

name="Economy A1 (AlphaGo)",

n_types=3,

n_goods=3,

n_agents_per_type=50,

produces=np.array([1, 2, 0]), # Type 1→Good 2, Type 2→Good 3, Type 3→Good 1

storage_costs=np.array([0.1, 1.0, 20.0]),

utility=np.array([100.0, 100.0, 100.0]),

discount=0.95

)

print(f"Economy: {config_a1.name}")

print(f"Total agents: {config_a1.n_agents}")

print(f"Storage costs: s = {config_a1.storage_costs}")

print(f"Utility: u = {config_a1.utility}")

print(f"Production: Type i produces good {config_a1.produces + 1}")

print()

# === Train AlphaGo agents ===

print("Training AlphaGo agents for Economy A1...")

print("=" * 60)

t0 = time.time()

np.random.seed(42)

agents_a1, model_a1, hist_a1 = train_alphago_agents(

config_a1,

n_iterations=40, # More iterations for convergence

n_eval_periods=300, # More self-play periods

n_sims=200, # MCTS simulations per state

rollout_depth=15, # Deeper look-ahead

c_puct=2.0, # Exploration constant

net_epochs=10, # Network training epochs

verbose=True

)

print(f"\nTraining complete in {time.time()-t0:.1f}s")

print("\nLearned Trade Policies:")

print_learned_policies(agents_a1, config_a1)Economy: Economy A1 (AlphaGo)

Total agents: 150

Storage costs: s = [ 0.1 1. 20. ]

Utility: u = [100. 100. 100.]

Production: Type i produces good [2 3 1]

Training AlphaGo agents for Economy A1...

============================================================

Iteration 1/40 (ε=0.49) T1:trade=0.65,cons=0.96 T2:trade=0.56,cons=0.96 T3:trade=0.65,cons=0.96

Iteration 2/40 (ε=0.47) T1:trade=0.75,cons=0.68 T2:trade=0.69,cons=0.68 T3:trade=0.75,cons=0.98

Iteration 3/40 (ε=0.46) T1:trade=0.83,cons=0.77 T2:trade=0.49,cons=0.47 T3:trade=0.83,cons=0.98

Iteration 4/40 (ε=0.45) T1:trade=0.58,cons=0.84 T2:trade=0.63,cons=0.63 T3:trade=0.88,cons=0.99

Iteration 5/40 (ε=0.44) T1:trade=0.71,cons=0.89 T2:trade=0.74,cons=0.74 T3:trade=0.92,cons=0.99

Iteration 6/40 (ε=0.42) T1:trade=0.50,cons=0.92 T2:trade=0.82,cons=0.52 T3:trade=0.94,cons=0.99

Iteration 7/40 (ε=0.41) T1:trade=0.65,cons=0.95 T2:trade=0.86,cons=0.66 T3:trade=0.96,cons=1.00

Iteration 8/40 (ε=0.40) T1:trade=0.75,cons=0.96 T2:trade=0.61,cons=0.77 T3:trade=0.97,cons=1.00

Iteration 9/40 (ε=0.39) T1:trade=0.83,cons=0.97 T2:trade=0.67,cons=0.84 T3:trade=0.98,cons=1.00

Iteration 10/40 (ε=0.38) T1:trade=0.88,cons=0.98 T2:trade=0.76,cons=0.89 T3:trade=0.99,cons=1.00

Iteration 11/40 (ε=0.36) T1:trade=0.91,cons=0.99 T2:trade=0.83,cons=0.92 T3:trade=0.99,cons=1.00

Iteration 12/40 (ε=0.35) T1:trade=0.94,cons=0.99 T2:trade=0.88,cons=0.94 T3:trade=0.99,cons=1.00

Iteration 13/40 (ε=0.34) T1:trade=0.66,cons=0.99 T2:trade=0.62,cons=0.96 T3:trade=1.00,cons=1.00

Iteration 14/40 (ε=0.33) T1:trade=0.46,cons=1.00 T2:trade=0.72,cons=0.97 T3:trade=1.00,cons=1.00

Iteration 15/40 (ε=0.31) T1:trade=0.62,cons=1.00 T2:trade=0.51,cons=0.68 T3:trade=1.00,cons=1.00

Iteration 16/40 (ε=0.30) T1:trade=0.74,cons=1.00 T2:trade=0.36,cons=0.78 T3:trade=1.00,cons=1.00

Iteration 17/40 (ε=0.29) T1:trade=0.81,cons=1.00 T2:trade=0.25,cons=0.55 T3:trade=1.00,cons=1.00

Iteration 18/40 (ε=0.28) T1:trade=0.87,cons=1.00 T2:trade=0.47,cons=0.38 T3:trade=0.70,cons=1.00

Iteration 19/40 (ε=0.26) T1:trade=0.91,cons=1.00 T2:trade=0.62,cons=0.57 T3:trade=0.79,cons=1.00

Iteration 20/40 (ε=0.25) T1:trade=0.94,cons=1.00 T2:trade=0.43,cons=0.70 T3:trade=0.85,cons=1.00

Iteration 21/40 (ε=0.24) T1:trade=0.96,cons=1.00 T2:trade=0.30,cons=0.79 T3:trade=0.90,cons=1.00

Iteration 22/40 (ε=0.22) T1:trade=0.97,cons=1.00 T2:trade=0.51,cons=0.55 T3:trade=0.93,cons=1.00

Iteration 23/40 (ε=0.21) T1:trade=0.98,cons=1.00 T2:trade=0.65,cons=0.39 T3:trade=0.95,cons=1.00

Iteration 24/40 (ε=0.20) T1:trade=0.98,cons=1.00 T2:trade=0.76,cons=0.27 T3:trade=0.96,cons=1.00

Iteration 25/40 (ε=0.19) T1:trade=0.70,cons=1.00 T2:trade=0.53,cons=0.49 T3:trade=0.98,cons=1.00

Iteration 26/40 (ε=0.17) T1:trade=0.79,cons=1.00 T2:trade=0.37,cons=0.64 T3:trade=0.98,cons=1.00

Iteration 27/40 (ε=0.16) T1:trade=0.56,cons=1.00 T2:trade=0.56,cons=0.45 T3:trade=0.99,cons=1.00

Iteration 28/40 (ε=0.15) T1:trade=0.69,cons=1.00 T2:trade=0.69,cons=0.62 T3:trade=0.99,cons=1.00

Iteration 29/40 (ε=0.14) T1:trade=0.79,cons=1.00 T2:trade=0.69,cons=0.73 T3:trade=0.99,cons=1.00

Iteration 30/40 (ε=0.12) T1:trade=0.85,cons=1.00 T2:trade=0.78,cons=0.51 T3:trade=1.00,cons=1.00

Iteration 31/40 (ε=0.11) T1:trade=0.89,cons=1.00 T2:trade=0.55,cons=0.66 T3:trade=1.00,cons=1.00

Iteration 32/40 (ε=0.10) T1:trade=0.93,cons=1.00 T2:trade=0.68,cons=0.47 T3:trade=1.00,cons=1.00

Iteration 33/40 (ε=0.10) T1:trade=0.95,cons=0.70 T2:trade=0.77,cons=0.33 T3:trade=1.00,cons=1.00

Iteration 34/40 (ε=0.10) T1:trade=0.96,cons=0.79 T2:trade=0.54,cons=0.24 T3:trade=1.00,cons=1.00

Iteration 35/40 (ε=0.10) T1:trade=0.68,cons=0.85 T2:trade=0.66,cons=0.46 T3:trade=1.00,cons=1.00

Iteration 36/40 (ε=0.10) T1:trade=0.47,cons=0.90 T2:trade=0.76,cons=0.33 T3:trade=1.00,cons=1.00

Iteration 37/40 (ε=0.10) T1:trade=0.63,cons=0.93 T2:trade=0.83,cons=0.23 T3:trade=1.00,cons=1.00

Iteration 38/40 (ε=0.10) T1:trade=0.74,cons=0.95 T2:trade=0.87,cons=0.46 T3:trade=1.00,cons=1.00

Iteration 39/40 (ε=0.10) T1:trade=0.52,cons=0.96 T2:trade=0.91,cons=0.62 T3:trade=1.00,cons=1.00

Iteration 40/40 (ε=0.10) T1:trade=0.66,cons=0.98 T2:trade=0.92,cons=0.74 T3:trade=1.00,cons=1.00

Training complete in 41.3s

Learned Trade Policies:

Type 1 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 1.000 0.000 0.000

Good 2 0.665 0.700 0.046

Good 3 0.973 0.827 0.822

Consume Policy P(consume=1):

Holding Good 1: 0.975

Holding Good 2: 0.677

Holding Good 3: 0.463

Type 2 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.436 0.379 0.358

Good 2 0.647 0.678 0.030

Good 3 0.777 0.921 0.602

Consume Policy P(consume=1):

Holding Good 1: 0.732

Holding Good 2: 0.737

Holding Good 3: 0.623

Type 3 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.042 0.188 1.000

Good 2 0.693 0.590 0.999

Good 3 0.000 0.000 0.000

Consume Policy P(consume=1):

Holding Good 1: 0.138

Holding Good 2: 0.144

Holding Good 3: 1.000

# === Run Economy A1 with trained agents ===

print("Running Economy A1 with trained AlphaGo agents...")

print("=" * 60)

np.random.seed(42)

sim_a1 = KWAlphaGoSimulation(config_a1, agents_a1, seed=42)

sim_a1.run(n_periods=1000, verbose=True)

print_holdings_table(sim_a1, period_label="at t=1000")

# Plot holdings distribution

fig_a1 = plot_holdings_distribution(

sim_a1, title="Economy A1 (AlphaGo): Distribution of Holdings\n"

"(cf. Figure 6 in MMS paper)")

# Plot policy evolution during training

plot_policy_evolution(hist_a1, config_a1);Running Economy A1 with trained AlphaGo agents...

============================================================

Period 100/1000: Trades= 11, Consumptions= 14

Period 200/1000: Trades= 6, Consumptions= 9

Period 300/1000: Trades= 13, Consumptions= 12

Period 400/1000: Trades= 9, Consumptions= 13

Period 500/1000: Trades= 8, Consumptions= 7

Period 600/1000: Trades= 12, Consumptions= 10

Period 700/1000: Trades= 10, Consumptions= 8

Period 800/1000: Trades= 10, Consumptions= 15

Period 900/1000: Trades= 10, Consumptions= 9

Period 1000/1000: Trades= 8, Consumptions= 12

Holdings Distribution at t=1000

=====================================

π_i^h(j) j=1 j=2 j=3

-------------------------------------

i=1 0.020 0.980 0.000

i=2 0.620 0.060 0.320

i=3 0.820 0.180 0.000

Discussion: Economy A1¶

The AlphaGo-style agents should converge to the fundamental equilibrium, matching what the MMS classifier system found. The MCTS enables agents to discover that Good 1 is optimal as a medium of exchange by looking ahead: holding Good 1 leads to better future trade opportunities because its low storage cost makes it universally acceptable.

4. Economy A2: Fundamental vs. Speculative Equilibrium¶

Economy A2 raises utility to . At this level, rational expectations with sufficient patience predicts the speculative equilibrium: Type 1 agents should accept Good 3 (expensive to store) because they expect to easily trade it.

The MMS paper’s key finding was that classifier agents converge to the fundamental equilibrium even here, due to early “myopia” in the learning process.

Question: Does AlphaGo’s forward-looking MCTS overcome this myopia and find the speculative equilibrium? Or does it also converge to fundamental?

# Economy A2: High utility — tests fundamental vs. speculative equilibrium

config_a2 = EconomyConfig(

name="Economy A2 (AlphaGo, High Utility)",

n_types=3,

n_goods=3,

n_agents_per_type=50,

produces=np.array([1, 2, 0]),

storage_costs=np.array([0.1, 1.0, 20.0]),

utility=np.array([500.0, 500.0, 500.0]), # High utility!

discount=0.95

)

print("Training AlphaGo agents for Economy A2 (High Utility)...")

print("=" * 60)

t0 = time.time()

np.random.seed(123)

agents_a2, model_a2, hist_a2 = train_alphago_agents(

config_a2,

n_iterations=40,

n_eval_periods=300,

n_sims=200,

rollout_depth=15,

c_puct=2.0,

net_epochs=10,

verbose=True

)

print(f"\nTraining complete in {time.time()-t0:.1f}s")

print("\nLearned Trade Policies:")

print_learned_policies(agents_a2, config_a2)Training AlphaGo agents for Economy A2 (High Utility)...

============================================================

Iteration 1/40 (ε=0.49) T1:trade=0.65,cons=0.96 T2:trade=0.65,cons=0.96 T3:trade=0.65,cons=0.96

Iteration 2/40 (ε=0.47) T1:trade=0.46,cons=0.98 T2:trade=0.75,cons=0.98 T3:trade=0.75,cons=0.98

Iteration 3/40 (ε=0.46) T1:trade=0.32,cons=0.98 T2:trade=0.83,cons=0.98 T3:trade=0.83,cons=0.98

Iteration 4/40 (ε=0.45) T1:trade=0.52,cons=0.99 T2:trade=0.88,cons=0.99 T3:trade=0.58,cons=0.99

Iteration 5/40 (ε=0.44) T1:trade=0.37,cons=0.99 T2:trade=0.92,cons=0.99 T3:trade=0.71,cons=0.99

Iteration 6/40 (ε=0.42) T1:trade=0.26,cons=0.99 T2:trade=0.94,cons=0.99 T3:trade=0.50,cons=0.99

Iteration 7/40 (ε=0.41) T1:trade=0.18,cons=1.00 T2:trade=0.66,cons=1.00 T3:trade=0.65,cons=1.00

Iteration 8/40 (ε=0.40) T1:trade=0.13,cons=1.00 T2:trade=0.46,cons=1.00 T3:trade=0.75,cons=1.00

Iteration 9/40 (ε=0.39) T1:trade=0.09,cons=1.00 T2:trade=0.62,cons=1.00 T3:trade=0.83,cons=1.00

Iteration 10/40 (ε=0.38) T1:trade=0.06,cons=1.00 T2:trade=0.74,cons=1.00 T3:trade=0.58,cons=1.00

Iteration 11/40 (ε=0.36) T1:trade=0.04,cons=1.00 T2:trade=0.82,cons=1.00 T3:trade=0.71,cons=1.00

Iteration 12/40 (ε=0.35) T1:trade=0.03,cons=1.00 T2:trade=0.87,cons=1.00 T3:trade=0.79,cons=1.00

Iteration 13/40 (ε=0.34) T1:trade=0.02,cons=1.00 T2:trade=0.91,cons=1.00 T3:trade=0.86,cons=1.00

Iteration 14/40 (ε=0.33) T1:trade=0.01,cons=1.00 T2:trade=0.94,cons=1.00 T3:trade=0.90,cons=1.00

Iteration 15/40 (ε=0.31) T1:trade=0.01,cons=1.00 T2:trade=0.95,cons=1.00 T3:trade=0.93,cons=1.00

Iteration 16/40 (ε=0.30) T1:trade=0.01,cons=1.00 T2:trade=0.97,cons=1.00 T3:trade=0.95,cons=1.00

Iteration 17/40 (ε=0.29) T1:trade=0.01,cons=1.00 T2:trade=0.97,cons=1.00 T3:trade=0.97,cons=1.00

Iteration 18/40 (ε=0.28) T1:trade=0.00,cons=1.00 T2:trade=0.98,cons=1.00 T3:trade=0.68,cons=1.00

Iteration 19/40 (ε=0.26) T1:trade=0.00,cons=1.00 T2:trade=0.98,cons=1.00 T3:trade=0.48,cons=1.00

Iteration 20/40 (ε=0.25) T1:trade=0.00,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.63,cons=1.00

Iteration 21/40 (ε=0.24) T1:trade=0.00,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.74,cons=1.00

Iteration 22/40 (ε=0.22) T1:trade=0.00,cons=1.00 T2:trade=0.70,cons=1.00 T3:trade=0.82,cons=1.00

Iteration 23/40 (ε=0.21) T1:trade=0.00,cons=1.00 T2:trade=0.79,cons=1.00 T3:trade=0.57,cons=1.00

Iteration 24/40 (ε=0.20) T1:trade=0.00,cons=1.00 T2:trade=0.55,cons=1.00 T3:trade=0.40,cons=1.00

Iteration 25/40 (ε=0.19) T1:trade=0.00,cons=1.00 T2:trade=0.68,cons=1.00 T3:trade=0.28,cons=1.00

Iteration 26/40 (ε=0.17) T1:trade=0.00,cons=1.00 T2:trade=0.48,cons=1.00 T3:trade=0.49,cons=1.00

Iteration 27/40 (ε=0.16) T1:trade=0.00,cons=1.00 T2:trade=0.34,cons=1.00 T3:trade=0.64,cons=1.00

Iteration 28/40 (ε=0.15) T1:trade=0.00,cons=1.00 T2:trade=0.24,cons=1.00 T3:trade=0.45,cons=1.00

Iteration 29/40 (ε=0.14) T1:trade=0.00,cons=1.00 T2:trade=0.46,cons=1.00 T3:trade=0.32,cons=1.00

Iteration 30/40 (ε=0.12) T1:trade=0.00,cons=1.00 T2:trade=0.62,cons=1.00 T3:trade=0.52,cons=1.00

Iteration 31/40 (ε=0.11) T1:trade=0.30,cons=1.00 T2:trade=0.44,cons=1.00 T3:trade=0.66,cons=1.00

Iteration 32/40 (ε=0.10) T1:trade=0.51,cons=1.00 T2:trade=0.61,cons=1.00 T3:trade=0.46,cons=1.00

Iteration 33/40 (ε=0.10) T1:trade=0.36,cons=1.00 T2:trade=0.43,cons=1.00 T3:trade=0.32,cons=1.00

Iteration 34/40 (ε=0.10) T1:trade=0.25,cons=1.00 T2:trade=0.60,cons=1.00 T3:trade=0.53,cons=1.00

Iteration 35/40 (ε=0.10) T1:trade=0.18,cons=1.00 T2:trade=0.71,cons=1.00 T3:trade=0.37,cons=1.00

Iteration 36/40 (ε=0.10) T1:trade=0.42,cons=1.00 T2:trade=0.79,cons=1.00 T3:trade=0.56,cons=1.00

Iteration 37/40 (ε=0.10) T1:trade=0.30,cons=1.00 T2:trade=0.55,cons=1.00 T3:trade=0.69,cons=1.00

Iteration 38/40 (ε=0.10) T1:trade=0.21,cons=1.00 T2:trade=0.69,cons=1.00 T3:trade=0.78,cons=1.00

Iteration 39/40 (ε=0.10) T1:trade=0.14,cons=1.00 T2:trade=0.78,cons=1.00 T3:trade=0.55,cons=1.00

Iteration 40/40 (ε=0.10) T1:trade=0.10,cons=1.00 T2:trade=0.85,cons=1.00 T3:trade=0.38,cons=1.00

Training complete in 41.0s

Learned Trade Policies:

Type 1 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.000 0.000 0.000

Good 2 0.101 0.160 0.311

Good 3 0.734 0.028 0.034

Consume Policy P(consume=1):

Holding Good 1: 1.000

Holding Good 2: 0.313

Holding Good 3: 0.134

Type 2 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.144 0.561 0.325

Good 2 0.000 0.000 0.000

Good 3 0.395 0.847 0.642

Consume Policy P(consume=1):

Holding Good 1: 0.237

Holding Good 2: 1.000

Holding Good 3: 0.841

Type 3 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.130 0.668 0.384

Good 2 0.446 0.988 0.080

Good 3 0.000 0.000 0.000

Consume Policy P(consume=1):

Holding Good 1: 0.365

Holding Good 2: 0.461

Holding Good 3: 1.000

# Run Economy A2

print("Running Economy A2 with trained AlphaGo agents...")

print("=" * 60)

np.random.seed(42)

sim_a2 = KWAlphaGoSimulation(config_a2, agents_a2, seed=42)

sim_a2.run(n_periods=1000, verbose=True)

print_holdings_table(sim_a2, period_label="at t=1000")

# Check Kiyotaki-Wright condition for fundamental uniqueness

dist_a2 = sim_a2._compute_holdings_dist()

s3_minus_s2 = config_a2.storage_costs[2] - config_a2.storage_costs[1]

rhs = (1/3) * config_a2.utility[0] * abs(dist_a2[0, 2] - dist_a2[0, 1])

print(f"Kiyotaki-Wright condition check:")

print(f" s₃ - s₂ = {s3_minus_s2:.1f}")

print(f" (1/3)·u₁·|π₁ʰ(3) - π₁ʰ(2)| = {rhs:.2f}")

print(f" Fundamental unique? s₃-s₂ > RHS: {s3_minus_s2 > rhs}")

fig_a2 = plot_holdings_distribution(

sim_a2, title="Economy A2 (AlphaGo, u=500): Distribution of Holdings\n"

"(Rational expectations predicts speculative equilibrium)")

plot_policy_evolution(hist_a2, config_a2);Running Economy A2 with trained AlphaGo agents...

============================================================

Period 100/1000: Trades= 9, Consumptions= 4

Period 200/1000: Trades= 5, Consumptions= 3

Period 300/1000: Trades= 7, Consumptions= 5

Period 400/1000: Trades= 3, Consumptions= 4

Period 500/1000: Trades= 7, Consumptions= 5

Period 600/1000: Trades= 5, Consumptions= 3

Period 700/1000: Trades= 8, Consumptions= 4

Period 800/1000: Trades= 10, Consumptions= 10

Period 900/1000: Trades= 6, Consumptions= 5

Period 1000/1000: Trades= 8, Consumptions= 4

Holdings Distribution at t=1000

=====================================

π_i^h(j) j=1 j=2 j=3

-------------------------------------

i=1 0.000 0.640 0.360

i=2 0.320 0.000 0.680

i=3 0.720 0.280 0.000

Kiyotaki-Wright condition check:

s₃ - s₂ = 19.0

(1/3)·u₁·|π₁ʰ(3) - π₁ʰ(2)| = 46.67

Fundamental unique? s₃-s₂ > RHS: False

Discussion: Economy A2¶

The MMS paper found that classifier agents converge to the fundamental equilibrium even when rational expectations predicts the speculative one, because the bucket brigade learning rule makes agents initially myopic.

AlphaGo’s MCTS is explicitly forward-looking — it simulates future periods to evaluate actions. This raises the interesting question of whether MCTS’s lookahead enables agents to discover the speculative equilibrium, or whether the coordination problem (each agent’s optimal play depends on what others do) still drives convergence to the fundamental equilibrium.

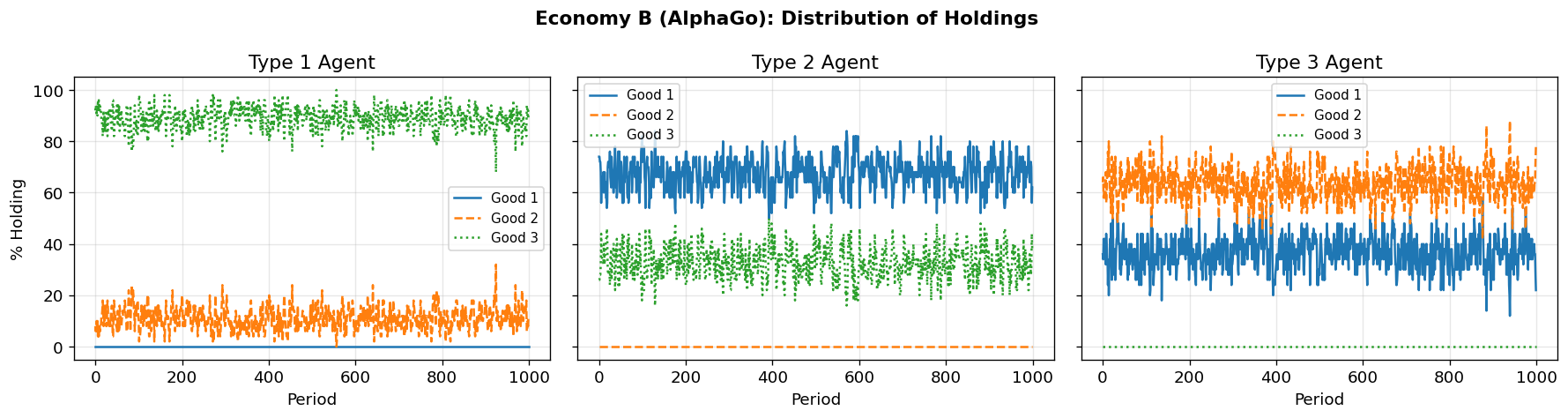

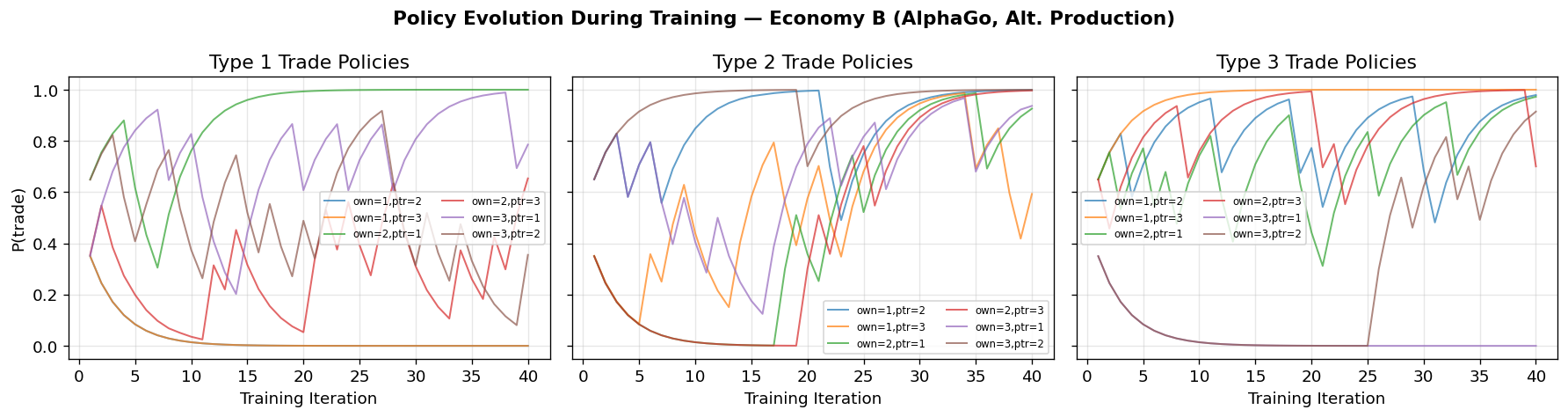

5. Economy B: Alternative Production Structure¶

Economy B uses a different production pattern and storage costs:

Type I produces Good 3, Type II produces Good 1, Type III produces Good 2

Storage costs:

The MMS paper found that the economy initially appears to be in a speculative equilibrium at , but transitions to the fundamental equilibrium by .

# Economy B: Alternative production structure

config_b = EconomyConfig(

name="Economy B (AlphaGo, Alt. Production)",

n_types=3,

n_goods=3,

n_agents_per_type=50,

produces=np.array([2, 0, 1]), # Type 1→Good 3, Type 2→Good 1, Type 3→Good 2

storage_costs=np.array([1.0, 4.0, 9.0]),

utility=np.array([100.0, 100.0, 100.0]),

discount=0.95

)

print("Training AlphaGo agents for Economy B...")

print("=" * 60)

t0 = time.time()

np.random.seed(456)

agents_b, model_b, hist_b = train_alphago_agents(

config_b,

n_iterations=40,

n_eval_periods=300,

n_sims=200,

rollout_depth=15,

c_puct=2.0,

net_epochs=10,

verbose=True

)

print(f"\nTraining complete in {time.time()-t0:.1f}s")

print("\nLearned Trade Policies:")

print_learned_policies(agents_b, config_b)Training AlphaGo agents for Economy B...

============================================================

Iteration 1/40 (ε=0.49) T1:trade=0.35,cons=0.96 T2:trade=0.65,cons=0.96 T3:trade=0.65,cons=0.96

Iteration 2/40 (ε=0.47) T1:trade=0.55,cons=0.98 T2:trade=0.75,cons=0.98 T3:trade=0.46,cons=0.98

Iteration 3/40 (ε=0.46) T1:trade=0.68,cons=0.98 T2:trade=0.83,cons=0.98 T3:trade=0.62,cons=0.98

Iteration 4/40 (ε=0.45) T1:trade=0.78,cons=0.99 T2:trade=0.58,cons=0.99 T3:trade=0.73,cons=0.99

Iteration 5/40 (ε=0.44) T1:trade=0.84,cons=0.99 T2:trade=0.71,cons=0.99 T3:trade=0.81,cons=0.99

Iteration 6/40 (ε=0.42) T1:trade=0.89,cons=0.99 T2:trade=0.79,cons=0.99 T3:trade=0.87,cons=0.99

Iteration 7/40 (ε=0.41) T1:trade=0.92,cons=1.00 T2:trade=0.56,cons=1.00 T3:trade=0.91,cons=1.00

Iteration 8/40 (ε=0.40) T1:trade=0.65,cons=1.00 T2:trade=0.69,cons=1.00 T3:trade=0.94,cons=1.00

Iteration 9/40 (ε=0.39) T1:trade=0.75,cons=1.00 T2:trade=0.78,cons=1.00 T3:trade=0.66,cons=1.00

Iteration 10/40 (ε=0.38) T1:trade=0.83,cons=1.00 T2:trade=0.85,cons=1.00 T3:trade=0.76,cons=1.00

Iteration 11/40 (ε=0.36) T1:trade=0.58,cons=1.00 T2:trade=0.89,cons=1.00 T3:trade=0.83,cons=1.00

Iteration 12/40 (ε=0.35) T1:trade=0.41,cons=1.00 T2:trade=0.93,cons=1.00 T3:trade=0.88,cons=1.00

Iteration 13/40 (ε=0.34) T1:trade=0.29,cons=1.00 T2:trade=0.95,cons=1.00 T3:trade=0.92,cons=1.00

Iteration 14/40 (ε=0.33) T1:trade=0.20,cons=1.00 T2:trade=0.96,cons=1.00 T3:trade=0.94,cons=1.00

Iteration 15/40 (ε=0.31) T1:trade=0.44,cons=1.00 T2:trade=0.97,cons=1.00 T3:trade=0.96,cons=1.00

Iteration 16/40 (ε=0.30) T1:trade=0.61,cons=1.00 T2:trade=0.98,cons=1.00 T3:trade=0.97,cons=1.00

Iteration 17/40 (ε=0.29) T1:trade=0.73,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.98,cons=1.00

Iteration 18/40 (ε=0.28) T1:trade=0.81,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.99,cons=1.00

Iteration 19/40 (ε=0.26) T1:trade=0.87,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.99,cons=1.00

Iteration 20/40 (ε=0.25) T1:trade=0.61,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=0.99,cons=1.00

Iteration 21/40 (ε=0.24) T1:trade=0.73,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=0.70,cons=1.00

Iteration 22/40 (ε=0.22) T1:trade=0.81,cons=1.00 T2:trade=0.70,cons=1.00 T3:trade=0.79,cons=1.00

Iteration 23/40 (ε=0.21) T1:trade=0.87,cons=1.00 T2:trade=0.49,cons=1.00 T3:trade=0.55,cons=1.00

Iteration 24/40 (ε=0.20) T1:trade=0.61,cons=1.00 T2:trade=0.64,cons=1.00 T3:trade=0.69,cons=1.00

Iteration 25/40 (ε=0.19) T1:trade=0.73,cons=1.00 T2:trade=0.75,cons=1.00 T3:trade=0.78,cons=1.00

Iteration 26/40 (ε=0.17) T1:trade=0.81,cons=1.00 T2:trade=0.83,cons=1.00 T3:trade=0.85,cons=1.00

Iteration 27/40 (ε=0.16) T1:trade=0.86,cons=1.00 T2:trade=0.88,cons=1.00 T3:trade=0.89,cons=1.00

Iteration 28/40 (ε=0.15) T1:trade=0.61,cons=1.00 T2:trade=0.91,cons=1.00 T3:trade=0.92,cons=1.00

Iteration 29/40 (ε=0.14) T1:trade=0.72,cons=1.00 T2:trade=0.94,cons=1.00 T3:trade=0.95,cons=1.00

Iteration 30/40 (ε=0.12) T1:trade=0.81,cons=1.00 T2:trade=0.96,cons=1.00 T3:trade=0.96,cons=1.00

Iteration 31/40 (ε=0.11) T1:trade=0.87,cons=1.00 T2:trade=0.97,cons=1.00 T3:trade=0.97,cons=1.00

Iteration 32/40 (ε=0.10) T1:trade=0.91,cons=1.00 T2:trade=0.98,cons=1.00 T3:trade=0.98,cons=1.00

Iteration 33/40 (ε=0.10) T1:trade=0.93,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.99,cons=1.00

Iteration 34/40 (ε=0.10) T1:trade=0.95,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.99,cons=1.00

Iteration 35/40 (ε=0.10) T1:trade=0.97,cons=1.00 T2:trade=0.99,cons=1.00 T3:trade=0.99,cons=1.00

Iteration 36/40 (ε=0.10) T1:trade=0.98,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=1.00,cons=1.00

Iteration 37/40 (ε=0.10) T1:trade=0.98,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=1.00,cons=1.00

Iteration 38/40 (ε=0.10) T1:trade=0.99,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=1.00,cons=1.00

Iteration 39/40 (ε=0.10) T1:trade=0.69,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=1.00,cons=1.00

Iteration 40/40 (ε=0.10) T1:trade=0.79,cons=1.00 T2:trade=1.00,cons=1.00 T3:trade=0.70,cons=1.00

Training complete in 41.5s

Learned Trade Policies:

Type 1 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.000 0.000 0.000

Good 2 1.000 0.215 0.654

Good 3 0.786 0.355 0.605

Consume Policy P(consume=1):

Holding Good 1: 1.000

Holding Good 2: 0.135

Holding Good 3: 0.285

Type 2 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.928 0.999 0.593

Good 2 0.926 1.000 0.997

Good 3 0.937 1.000 0.906

Consume Policy P(consume=1):

Holding Good 1: 0.121

Holding Good 2: 1.000

Holding Good 3: 0.390

Type 3 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3

------------------------------------------

Good 1 0.928 0.979 1.000

Good 2 0.973 0.811 0.700

Good 3 0.000 0.915 1.000

Consume Policy P(consume=1):

Holding Good 1: 0.000

Holding Good 2: 0.001

Holding Good 3: 1.000

# Run Economy B

print("Running Economy B with trained AlphaGo agents...")

print("=" * 60)

np.random.seed(42)

sim_b = KWAlphaGoSimulation(config_b, agents_b, seed=42)

sim_b.run(n_periods=1000, verbose=True)

print_holdings_table(sim_b, period_label="at t=1000")

fig_b = plot_holdings_distribution(

sim_b, title="Economy B (AlphaGo): Distribution of Holdings")

plot_policy_evolution(hist_b, config_b);Running Economy B with trained AlphaGo agents...

============================================================

Period 100/1000: Trades= 28, Consumptions= 34

Period 200/1000: Trades= 30, Consumptions= 31

Period 300/1000: Trades= 23, Consumptions= 26

Period 400/1000: Trades= 31, Consumptions= 33

Period 500/1000: Trades= 30, Consumptions= 26

Period 600/1000: Trades= 28, Consumptions= 33

Period 700/1000: Trades= 32, Consumptions= 31

Period 800/1000: Trades= 31, Consumptions= 36

Period 900/1000: Trades= 27, Consumptions= 25

Period 1000/1000: Trades= 27, Consumptions= 26

Holdings Distribution at t=1000

=====================================

π_i^h(j) j=1 j=2 j=3

-------------------------------------

i=1 0.000 0.120 0.880

i=2 0.620 0.000 0.380

i=3 0.220 0.780 0.000

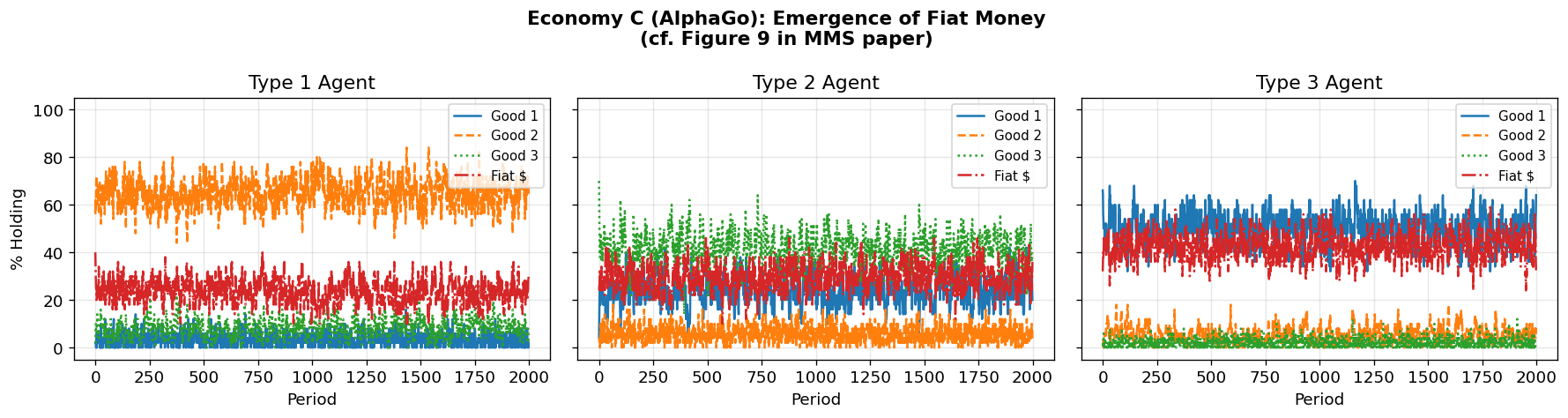

6. Economy C: Fiat Money¶

Economy C adds a fourth good — fiat money — that:

Has zero storage cost ()

Provides no utility to any agent (cannot be consumed)

Is introduced by randomly endowing some agents at

Storage costs:

The MMS paper found remarkably fast convergence: agents learn to use fiat money as a medium of exchange purely because it is costless to store. Can AlphaGo agents make the same discovery?

Note: The neural networks are now slightly larger (4 goods → 8-dimensional trade input, 4-dimensional consume input) but still tiny by modern standards.

# Economy C: Fiat Money (4 goods)

config_c = EconomyConfig(

name="Economy C (AlphaGo, Fiat Money)",

n_types=3,

n_goods=4, # 3 commodities + 1 fiat money

n_agents_per_type=50,

produces=np.array([1, 2, 0]), # Same Wicksell triangle

storage_costs=np.array([9.0, 14.0, 29.0, 0.0]), # Good 4 = fiat ($0 storage)

utility=np.array([100.0, 100.0, 100.0]),

discount=0.95,

n_fiat=48 # Number of fiat money units injected at t=0

)

print("Training AlphaGo agents for Economy C (Fiat Money)...")

print("=" * 60)

t0 = time.time()

np.random.seed(789)

agents_c, model_c, hist_c = train_alphago_agents(

config_c,

n_iterations=40,

n_eval_periods=300,

n_sims=200,

rollout_depth=15,

c_puct=2.0,

net_epochs=10,

verbose=True

)

print(f"\nTraining complete in {time.time()-t0:.1f}s")

print("\nLearned Trade Policies:")

print_learned_policies(agents_c, config_c)Training AlphaGo agents for Economy C (Fiat Money)...

============================================================

Iteration 1/40 (ε=0.49) T1:trade=0.35,cons=0.95 T2:trade=0.65,cons=0.67 T3:trade=0.61,cons=0.96

Iteration 2/40 (ε=0.47) T1:trade=0.55,cons=0.96 T2:trade=0.75,cons=0.47 T3:trade=0.73,cons=0.98

Iteration 3/40 (ε=0.46) T1:trade=0.38,cons=0.97 T2:trade=0.83,cons=0.33 T3:trade=0.81,cons=0.98

Iteration 4/40 (ε=0.45) T1:trade=0.57,cons=0.68 T2:trade=0.59,cons=0.23 T3:trade=0.86,cons=0.99

Iteration 5/40 (ε=0.44) T1:trade=0.70,cons=0.78 T2:trade=0.71,cons=0.16 T3:trade=0.90,cons=0.69

Iteration 6/40 (ε=0.42) T1:trade=0.79,cons=0.84 T2:trade=0.79,cons=0.41 T3:trade=0.93,cons=0.78

Iteration 7/40 (ε=0.41) T1:trade=0.55,cons=0.89 T2:trade=0.85,cons=0.59 T3:trade=0.95,cons=0.85

Iteration 8/40 (ε=0.40) T1:trade=0.69,cons=0.63 T2:trade=0.85,cons=0.70 T3:trade=0.96,cons=0.89

Iteration 9/40 (ε=0.39) T1:trade=0.48,cons=0.70 T2:trade=0.89,cons=0.79 T3:trade=0.97,cons=0.92

Iteration 10/40 (ε=0.38) T1:trade=0.63,cons=0.79 T2:trade=0.62,cons=0.56 T3:trade=0.98,cons=0.93

Iteration 11/40 (ε=0.36) T1:trade=0.74,cons=0.85 T2:trade=0.74,cons=0.39 T3:trade=0.98,cons=0.95

Iteration 12/40 (ε=0.35) T1:trade=0.52,cons=0.60 T2:trade=0.81,cons=0.28 T3:trade=0.99,cons=0.97

Iteration 13/40 (ε=0.34) T1:trade=0.66,cons=0.72 T2:trade=0.86,cons=0.19 T3:trade=0.99,cons=0.98

Iteration 14/40 (ε=0.33) T1:trade=0.46,cons=0.80 T2:trade=0.90,cons=0.14 T3:trade=0.99,cons=0.98

Iteration 15/40 (ε=0.31) T1:trade=0.33,cons=0.86 T2:trade=0.64,cons=0.39 T3:trade=0.99,cons=0.99

Iteration 16/40 (ε=0.30) T1:trade=0.53,cons=0.90 T2:trade=0.74,cons=0.28 T3:trade=0.99,cons=0.99

Iteration 17/40 (ε=0.29) T1:trade=0.67,cons=0.63 T2:trade=0.82,cons=0.20 T3:trade=0.99,cons=0.70

Iteration 18/40 (ε=0.28) T1:trade=0.77,cons=0.74 T2:trade=0.87,cons=0.44 T3:trade=0.99,cons=0.79

Iteration 19/40 (ε=0.26) T1:trade=0.83,cons=0.82 T2:trade=0.91,cons=0.31 T3:trade=0.99,cons=0.85

Iteration 20/40 (ε=0.25) T1:trade=0.88,cons=0.87 T2:trade=0.93,cons=0.52 T3:trade=0.99,cons=0.90

Iteration 21/40 (ε=0.24) T1:trade=0.92,cons=0.91 T2:trade=0.65,cons=0.66 T3:trade=0.99,cons=0.93

Iteration 22/40 (ε=0.22) T1:trade=0.94,cons=0.93 T2:trade=0.75,cons=0.46 T3:trade=0.99,cons=0.95

Iteration 23/40 (ε=0.21) T1:trade=0.96,cons=0.66 T2:trade=0.83,cons=0.62 T3:trade=0.99,cons=0.96

Iteration 24/40 (ε=0.20) T1:trade=0.67,cons=0.76 T2:trade=0.59,cons=0.73 T3:trade=0.99,cons=0.68

Iteration 25/40 (ε=0.19) T1:trade=0.76,cons=0.83 T2:trade=0.42,cons=0.81 T3:trade=0.99,cons=0.77

Iteration 26/40 (ε=0.17) T1:trade=0.83,cons=0.87 T2:trade=0.59,cons=0.85 T3:trade=0.96,cons=0.84

Iteration 27/40 (ε=0.16) T1:trade=0.58,cons=0.88 T2:trade=0.71,cons=0.90 T3:trade=0.97,cons=0.89

Iteration 28/40 (ε=0.15) T1:trade=0.41,cons=0.91 T2:trade=0.50,cons=0.92 T3:trade=0.98,cons=0.92

Iteration 29/40 (ε=0.14) T1:trade=0.59,cons=0.94 T2:trade=0.65,cons=0.65 T3:trade=0.98,cons=0.95

Iteration 30/40 (ε=0.12) T1:trade=0.70,cons=0.95 T2:trade=0.75,cons=0.46 T3:trade=0.99,cons=0.96

Iteration 31/40 (ε=0.11) T1:trade=0.79,cons=0.97 T2:trade=0.82,cons=0.61 T3:trade=0.99,cons=0.97

Iteration 32/40 (ε=0.10) T1:trade=0.55,cons=0.98 T2:trade=0.87,cons=0.72 T3:trade=0.99,cons=0.98

Iteration 33/40 (ε=0.10) T1:trade=0.39,cons=0.98 T2:trade=0.91,cons=0.80 T3:trade=0.99,cons=0.99

Iteration 34/40 (ε=0.10) T1:trade=0.57,cons=0.99 T2:trade=0.64,cons=0.57 T3:trade=0.99,cons=0.99

Iteration 35/40 (ε=0.10) T1:trade=0.70,cons=0.99 T2:trade=0.75,cons=0.69 T3:trade=0.99,cons=0.69

Iteration 36/40 (ε=0.10) T1:trade=0.49,cons=0.99 T2:trade=0.82,cons=0.49 T3:trade=0.99,cons=0.79

Iteration 37/40 (ε=0.10) T1:trade=0.64,cons=0.70 T2:trade=0.87,cons=0.64 T3:trade=0.99,cons=0.85

Iteration 38/40 (ε=0.10) T1:trade=0.45,cons=0.79 T2:trade=0.61,cons=0.75 T3:trade=0.99,cons=0.90

Iteration 39/40 (ε=0.10) T1:trade=0.61,cons=0.55 T2:trade=0.73,cons=0.82 T3:trade=0.99,cons=0.70

Iteration 40/40 (ε=0.10) T1:trade=0.73,cons=0.69 T2:trade=0.81,cons=0.58 T3:trade=0.99,cons=0.79

Training complete in 65.7s

Learned Trade Policies:

Type 1 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3 Fiat $

---------------------------------------------------

Good 1 0.419 0.038 0.073 0.229

Good 2 0.727 0.597 0.257 0.811

Good 3 0.933 0.977 0.461 0.971

Fiat $ 0.856 0.274 0.139 0.731

Consume Policy P(consume=1):

Holding Good 1: 0.687

Holding Good 2: 0.069

Holding Good 3: 0.533

Holding Fiat $: 0.217

Type 2 Agent — Learned Trade Policy P(trade=1):

Own \ Partner Good 1 Good 2 Good 3 Fiat $

---------------------------------------------------

Good 1 0.739 0.639 0.044 0.648

Good 2 0.226 0.524 0.030 0.700

Good 3 0.341 0.807 0.338 0.714

Fiat $ 0.499 0.505 0.019 0.286

Consume Policy P(consume=1):

Holding Good 1: 0.410

Holding Good 2: 0.576

Holding Good 3: 0.871

Holding Fiat $: 0.931